The Adeptus Mechanicus Bootcamp: A Gentle Seduction

It's been over a year since I stopped writing my code, and I have such sights to show you.

I am a Principal Engineer. I have been programming computers since the last millennium, in the tech industry for over a decade, and have the requisite “ivy league” Bachelor’s in Computer Science. I have written virtual memory, a file system, malloc, and a shell from scratch, and used continuation-passing style in production. “10x engineer” is the term. So when I tell you that it has been a year since I stopped writing my code and that the current frontier models make me look like an idiot child, I want you to understand my full meaning.

March 14th, 2025 was the day I stopped writing code. I still ship code - more than ever, in fact - but I don’t write it anymore.

It’s just about three months since I stopped looking at the code.

This is a very different world from any I could have imagined. It arrived (and is still arriving) faster than I could have guessed, and it’s only been a year!

The skills you need in this world aren’t prompting. They aren’t “AI literacy.” They are the engineering judgment I already had, applied at a higher altitude. But there’s a catch: if you don’t have the judgment, the altitude will kill you.

Welcome to the Mechanicum, developer. It’s time to skill up!

The Power Continuum

Recently a coworker asked me, in a Q&A in some AI-related meeting, what vibecoding actually was. Paraphrased, her question was (almost incredulous):

“Is it just… asking Claude for what you want and letting it do it?”

“Yes,” I said (hesitantly). “That’s all.”

I had been prepared for followups. I had context-wrangling wisdom to share, prompt-engineering tips to dispense. None of it was needed.

Within a week, she was demo’ing something in a team meeting that “Claude vibecoded.” She had, in fact, just asked for the thing. And gotten it.

The barrier wasn’t technical skill or technique or even knowledge - it was conceivability. The workflow wasn’t forbidden to her - it was absent from her mental model. The moment someone said “yes, that’s really all it is,” she was able to do it with the resources she already had.

Paul Graham described the Blub Paradox in 2001. You should go read the whole article to really understand the effect. But, in brief:

the only programmers in a position to see all the differences in power between the various languages are those who understand the most powerful one.

A programmer who only knows a hypothetical mid-power language called Blub can look down the power continuum and see lesser languages for what they are. But they can’t look up, because they lack the conceptual vocabulary to perceive what they’re missing. The ceiling is invisible from below.

Graham was talking about programming languages. Now that the hottest new programming language is English, the power continuum runs from “I type the code” through “I describe what code to type” up to “I describe the outcome and the code is a side effect.” Each step up that ladder is a step further from touching the implementation, and a step deeper into engineering judgment.

- 🔧 Experiential development: You write code, run it, see what happens, iterate. Hands on every surface. This is where most of us started - and where the artisan’s ambient quality loop lives. More on that shortly.

- 🧪 Test-driven development: You write the specification first (as tests), then write code to satisfy it. You’ve separated “what it should do” from “how to make it do that.”

- 📋 Spec-driven development: You write a technical specification. The agent writes the tests and the code. You’ve moved one more level up: you’re specifying intent in structured prose, and the machine handles both the contract and the implementation.

- 💡 Intent-driven development: You write a product brief. Plain prose. Soft skills. The agent handles design, specification, implementation, and verification. I have tasted of this fruit and it has opened up my eyes.

Each level requires more engineering judgment, not less. Each level moves you further from the code and closer to the intent. Having to drop down a level is a signal. Having to drop two levels is a strong signal. Needing to go back to experiential means the ritual failed - something about the specification, context, or tooling wasn’t sufficient - and you need to diagnose why before you try again.

And if your engineering vocabulary doesn’t yet include “I describe intent and the machine handles implementation,” that workflow isn’t forbidden to you; it just happens to be inconceivable to you. You couldn’t even formulate the desire to work that way, the same way Orwell’s Newspeak made thoughtcrime impossible by removing the words needed to think it.

In 2007, Charles Simonyi - the father of Microsoft Word - described exactly this destination. He called it “intentional programming”: domain experts would express their intent directly, and a “generator” would produce the code. The programmers wouldn’t write the software; they’d build the generator and then get out of the way. He was almost twenty years early. The generator he needed didn’t exist yet.

It does now.

But we’re not ready to hand over the keys! For a short while yet, engineers will run the generator and hand a product over to the customers. The generators are still a little persnickety and can be challenging to wrangle, and the translation of intent into code - anything still on the power continuum rather than sitting at the end of it - still benefits from everything engineering teams have learned how to do. Code goes first - but not alone! By the time the generators arrive in the other domains of knowledge work, they’ll be so good that the engineers won’t have to play Tech-Priest intermediary anymore.

So don’t worry about the future! But if you’re a software engineer, today, there are some key skills that’ll help you keep pace as the field ascends the power continuum.

The Skills

So what does it take to operate at the top of this continuum? Three things. All of them manifest in “AI doesn’t work” complaints when they go wrong, properly retortable with “Skill Issue.”

Skill 1: Asking the Right Questions

My responses are infinite. You must ask the right questions.

This sounds like a prompting tip. It isn’t. The skill isn’t in phrasing the prompt well - it’s in knowing what to ask, which presupposes deep domain expertise. The Principal Engineer who stopped writing code still needs to think like one. The models are better than me at producing code. They are not (yet!) better than me at knowing what code should exist or what behavior it should exhibit.

Consider the Rust rewrite of SQLite that benchmarked at roughly 1,800x to 20,000x slower on key lookups than the original C implementation. There’s a well-known axiom in manufacturing and contracting, a variant of the “good, cheap, fast, pick two” constraint: “Anything unspecified will be done to the bare minimum quality required to fulfill the contract.” The critics saw those benchmarks and concluded that LLMs produce plausible code, not good code.

They’re right but they’ve buried the real load-bearing insight way below the catchy headline: you get to, nay, must, define what “plausible” is:

The tool is at its best when the developer can define the acceptance criteria as specific, measurable conditions that help distinguish working from broken. … The vibes are not enough. Define what correct means. Then measure.

SQLite’s TH3 test suite is proprietary. The reimplementors couldn’t have had access to the full behavioral specification, including performance constraints. So those constraints went unspecified. And anything unspecified gets done as cheaply as possible. The model did exactly what was asked; the model was not the problem. The specification was the problem. Skill issue.
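One way to picture "define what correct means, then measure" is to write each acceptance criterion down as data: a name, a measurement, and a limit. The sketch below is only an illustration under assumptions. `Criterion`, `evaluate`, `slowdown`, the toy `indexed` and `full_scan` lookups, and the 2x limit are all hypothetical names and numbers, not anything from the SQLite rewrite or its critics.

```python
import time
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Criterion:
    """One measurable acceptance condition: the observed value must be <= limit."""
    name: str
    measure: Callable[[], float]
    limit: float


def evaluate(criteria: List[Criterion]) -> List[Tuple[str, float, float]]:
    """Return (name, observed, limit) for every criterion that failed."""
    failures = []
    for criterion in criteria:
        observed = criterion.measure()
        if observed > criterion.limit:
            failures.append((criterion.name, observed, criterion.limit))
    return failures


def best_lookup_seconds(lookup: Callable[[int], object], keys: List[int], repeats: int = 5) -> float:
    """Best-of-N wall-clock time to look up every key once."""
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        for key in keys:
            lookup(key)
        best = min(best, time.perf_counter() - start)
    return best


def slowdown(candidate: Callable[[int], object], baseline: Callable[[int], object], keys: List[int]) -> float:
    """How many times slower the candidate is than the baseline on the same keys."""
    baseline_seconds = max(best_lookup_seconds(baseline, keys), 1e-12)  # avoid dividing by zero
    return best_lookup_seconds(candidate, keys) / baseline_seconds


# Toy stand-ins (assumptions): a dict lookup as the baseline, a linear scan as the rewrite.
table = {k: k * k for k in range(200)}
rows = list(table.items())
keys = list(table)


def indexed(key: int) -> int:
    return table[key]


def full_scan(key: int) -> int:
    return next(value for k, value in rows if k == key)


criteria = [
    Criterion("key-lookup slowdown vs. baseline", lambda: slowdown(full_scan, indexed, keys), limit=2.0),
]
for name, observed, limit in evaluate(criteria):
    print(f"FAIL {name}: observed {observed:.1f}x, limit {limit}x")
```

The point of the shape is that the performance constraint now exists somewhere the agent can read it and a test runner can enforce it. An unwritten constraint is an unspecified one.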

The Artisan’s Ambient Loop

In Desire Makes Artists, I wrote about how pre-industrial artisans produced goods with art infused during the process, because that’s what happens when humans make things by hand. The craftsperson’s quality wasn’t exclusively from intentional specification - it was also emergent from proximity. You’re in there, hands on every surface, spending an hour doing minute scrollwork on the side of a flintlock rifle, say. If you notice that one of the plates is a little loose, you fix it - that’ll take a minute or two and you’re already locked in for an hour. And the whole project has been a days-long undertaking and you don’t want your beautiful thing to be a piece of garbage - of course you’ll fix it. Rinse and repeat. The ornamentation was a signature of the real value: hours of incidental contact during which the artisan was continuously, unconsciously matching intended behavior against actual behavior. The constant contact let the creators discover and fix problems that were never formally specified, and so the customers never had to overthink the specification.

Industrialization didn’t just remove the decoration; it removed that ambient inspection loop. Now you get exactly what you specified, and everything unspecified is up in the air. The factory worker doesn’t have your context and isn’t spending but a passing moment in contact with the widget - whose intended behavior they may not even know.

Sound familiar?

The specification spectrum has three failure modes:

- Underspecify: You get “plausible.” The Rust SQLite rewrite. 1,800x slower because nobody said it shouldn’t be. Everything unspecified gets done as cheaply as possible.

- The artisan sweet spot: The ambient loop catches what formal specification misses. Beautiful. This is the old world. This is where typing your own code lived. The technique doesn’t scale.

- Overspecify: You burn all the human bandwidth on definition and ship nothing. This is the Load-Bearing Rate Limiter problem inverted: instead of the human bottlenecking production, the human bottlenecks definition. You’ve gold-plated the spec instead of the code and you’re just as broke. You spent four days iterating on a pull request that adds one button because you future-proofed it against quantum computing, ensured optimal Big-O complexity, and ran out the payroll budget before you shipped a feature that could bring in any revenue.

We don’t need code inlaid with ornate scrollwork, but we do need some mechanism to catch what we forgot to specify.

The skill is finding the right altitude on that spectrum per task. Performance-critical paths need tight specs. Internal tooling needs a product brief and a prayer. Knowing which is which before you’ve burned the time finding out is engineering judgment. Same as it’s ever been.

Skill 2: Context Wrangling

If you know the specification, you have to make sure the machine knows it, too. Your human brain has a ton of assumptions in context at any given time that factor into that knowing, and a ton of implicit associations that will be considered when needed.

You have to provide those in a form the machine can understand. This is filling the agent’s context window with the right stuff in the right way. This is, for now, as much an art as a process.

- Know what the machine knows: The cheapest context is the one you don’t have to pay for; the models have a lot of “intuitive” knowledge. Don’t waste space repeating it and certainly don’t try to fight it.

- Know what the machine doesn’t know: The model can’t infer your architecture from vibes. The model can’t read your mind about which edge cases matter. The model can’t read your teammates’ minds to know how detailed a pull request description they’ll actually read. These are all things that you and your fellow humans would eventually pick up, internalize, and file away in your brains at the right distance from your work so that they kick in when needed. You have to make them explicit, so that they aren’t unspecified.

This is the practical, mechanical skill. What goes into the machine’s context window, what stays out, and when. I’ve written about this at length, but these are by no means exhaustive, nor necessarily going to stay relevant, so don’t sweat about reading them all and certainly don’t try to do them all:

- Stop Doing AGENTS.md

- .gitignore is not .agentignore

- Model Context Protocol, Not Agent Context Protocol

- How I Learned to Stop Coding and Love the Machine # Cursor

The core of the skill is literally imagining what the machine is going to see when it starts working on your problem. What does it know? What doesn’t it know? What assumptions is it going to make that are wrong? That’s context wrangling, and it’s the same skill you use when you write a design doc for a new teammate: you’re modeling someone else’s mental state and filling in the gaps.

Skill 3: Discernment

To know what you know and what you do not know, that is true knowledge. – Confucius

The third skill is knowing when to stop. Knowing whether the thing should exist at all. The cost-benefit analysis synthesized across time, money, quality, and market absorption, and the rest of the constraints that matter to you.

The intuitive response to unprecedented productivity is “do everything faster.” The correct response is almost the opposite. – The Load-Bearing Rate Limiter Was Human

Jeremy Howard at fast.ai wrote about dark flow - the seductive trap of agentic productivity. You can build so much, so fast that you build things you didn’t need. The flow state of commissioning is just as intoxicating as the flow state of coding, but the blast radius is larger because the output rate is higher. You can burn through a year’s token budget in a week if nobody’s asking “should we?”

Steve Yegge’s AI Vampire lives in the dark flow: the vampire feeds on your productive energy and eventually burns you out - not from typing, but from the cognitive overhead of steering. If you try to overclock the human in the loop, the loop falls apart. The vampire doesn’t care that you’re the smartest engineer in the room: it’ll drain you just the same.

Tom Wojcik raised the concern that outsourcing coding to AI causes a kind of “Digital Dementia” - your skills atrophy as you stop practicing them. He’s observing something real, and some research supports that observation at face value.

The framing is wrong. Measure horsemanship skills in millennials and I bet you’ll find that they, as a population, are terrible. Sound the alarm!? No. Horses were obviated. The correct metric isn’t “can you still hand-write a merge sort.” The correct metric is “can you effectively commission, verify, and steer the thing that writes merge sorts.” Or better yet: can you determine when you even need to be sorting in the first place? That’s discernment operating at the product-intent level - a floor above the implementation question - and entirely invisible to anyone measuring typing speed or even code comprehension. Note that you still need to understand sorting - you just don’t need to be doing it yourself. In fact, you’d probably better not be!

Many folks aren’t measuring the right things yet because they’re still on the lower rungs of the power continuum and can’t look up.

Discernment’s criticality also scales with blast radius.

- Small: you overspecified a button and wasted an afternoon.

- Medium: you underspecified a library rewrite and got an 1,800x performance regression.

- Large: at the top of your delegation tree, an undiscerned intent propagates through every node below you. You blow your series B funding in a month on a dozen dead ends.

The higher you climb, the more discernment matters.

For Those With Eyes to See, Let Them See

You’ve got the skills. You’re asking the right questions, feeding the machine the right context, and exercising discernment about what to build and when to stop building. Now what?

You scale.

You can’t overclock a human. You’ve got these new tools and you will never code as fast as them, so don’t try. Instead, look around.

Look up at your current manager. They have been doing exactly this: managing through indirection. Not just you, but probably several people just like you. They have a meeting, express what they hope y’all will do, and then check back in later, praying that you actually did what they wanted. That’s what being a manager is. They set intent, provide context, exercise discernment about what their reports should work on, and review the output. The three skills, applied to humans.

Your job is becoming their job. But instead of the traditional path of stepping up and replacing them, look down at your own hands.

Your hands used to be where the production pipeline ended. Now they’re where delegation begins.

The Gas Town Ladder

- Stage 1: Zero or Near-Zero AI: maybe code completions, sometimes ask Chat questions

- Stage 2: Coding agent in IDE, permissions turned on. A narrow coding agent in a sidebar asks your permission to run tools.

- Stage 3: Agent in IDE, YOLO mode: Trust goes up. You turn off permissions, agent gets wider.

- Stage 4: In IDE, wide agent: agent gradually grows to fill the screen. Code is just for diffs.

- Stage 5: CLI, single agent. YOLO. Diffs scroll by. You may or may not look at them.

- Stage 6: CLI, multi-agent, YOLO. You regularly use 3 to 5 parallel instances. You are very fast.

- Stage 7: 10+ agents, hand-managed. You are starting to push the limits of hand-management.

- Stage 8: Building your own orchestrator. You are on the frontier, automating your workflow.

Yegge’s figure 8 shows a manager, but figure 8 isn’t the ceiling. Yegge was narrating the buildout and operation of the Gas Town system, not describing the steady-state organizational structure that emerges from its presence in the world.

Recursive Delegation

Here’s the insight that the Gas Town progression implies but doesn’t fully explore: figure 8 is just where individual contribution ends and recursive delegation begins.

You will reach the point where you have too many direct-report agents. There is a limit to effective span of control - this is why your manager has a manager. The solution is the same one every organization in history discovered: hierarchy. You don’t manage ten agents. You build a managing agent for your direct reports, and you dialogue with that manager. Just as your manager talks to you, and their manager talks to them.

Then the process repeats. Maybe you’re managing two managers, each of whom manages five individual-contributor agents. Maybe you add a layer and it grows, expanding ever downward until you’ve scaled your production capabilities to the level you actually need.
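The span-of-control arithmetic can be sketched in a few lines. This is a back-of-the-envelope model, not a description of any real orchestrator: `layers_below_you` and the `span` parameter are hypothetical names, and the assumption is that every manager (you included) can handle at most `span` direct reports.

```python
import math
from typing import List


def layers_below_you(workers: int, span: int) -> List[int]:
    """Agent counts per layer, from individual contributors up to your direct reports.

    Assumes every manager, including you, handles at most `span` direct reports.
    """
    if workers < 1:
        raise ValueError("need at least one worker agent")
    if span < 2:
        raise ValueError("a span below 2 never reduces the number of reports")
    layers = [workers]
    # Keep adding a managing layer until the top layer fits within your own span.
    while layers[-1] > span:
        layers.append(math.ceil(layers[-1] / span))
    return layers


print(layers_below_you(3, 5))    # [3]          you manage three agents directly
print(layers_below_you(10, 5))   # [10, 2]      two managers, five agents each
print(layers_below_you(100, 5))  # [100, 20, 4] two managing layers under you
```

The depth grows logarithmically with the number of workers, which is why adding a layer buys so much capacity and why a bad intent at the top reaches so many nodes.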

The structure itself is not new; this is exactly how basically every company ever has organized itself, because it works. Maybe a pure-machine world will discover something better, but for now - while we are still hybrids, still cyborgifying our workflows - this is the structure that works. We see it working today, as it has for centuries.

The difference: traditionally, every node in that org chart had to be a human and most nodes were not given the combination of legal, regulatory, and budgetary allowance to build their own subtrees of direct reports. But if you have access to LLMs or token budgets at your job today - congratulations. You do have that allowance. It’s time to get hiring!

The Ceiling Is Discernment, Again

We are not going to do this recklessly, though, because we paid attention at the Adeptus Mechanicus Bootcamp. We will not slip into dark flow. We will not get drained by the AI vampire.

The blast radius at the top of the hierarchy is enormous, and now every node can sit at the top of a hierarchy! An undiscerned intent can propagate and multiply at speeds and scales previously unimaginable. The three skills apply at every level of the tree. They don’t get easier as you ascend, but they do get more consequential.

Where We’re Going, We Don’t Need Eyes

In manufacturing, a lights-out factory runs with no human presence on the floor. The machines operate in the dark because there is nobody there who needs to see.

The lights-out codebase is the same idea applied to software. Code that is authored, tested, reviewed, and deployed without a human ever seeing it. You specified the behavior. The machines delivered it. The tests pass. The customers are happy. Why would you need to look at code? And, why would you risk letting a human touch it?

Perhaps this sounds terrifying. It should - a little. That’s the appropriate amount of respect for

The Event Horizon

You can’t get to a singularity without passing through an event horizon, don’t you know.

Geoffrey Huntley calls it the “oh fuck” moment. He sent Cursor off to port a Rust audio library to Haskell, took his kids to the pool and came back to a working library. It wasn’t regurgitating StackOverflow - it was creating something new, from intent alone. Jaw on the ground.

Every engineer on this journey has that moment. The moment the capability becomes real - viscerally, not intellectually. That’s the event horizon.

And if it’s not behind you yet, it will be soon.

The Principal Engineer who hasn’t written code in a year and hasn’t read it in nearly three months - that’s not a thought experiment. That was the opening to all this. That’s my past.

The Bootcamp

The frontier models make me look like an idiot child at typing code. They always will. The value of that skill is gone and it’s never coming back.

But the models can’t do what got me here, yet. The questioning: knowing what should exist and how to ask for it. The context wrangling: knowing what the machine needs to know that it doesn’t know yet. The discernment: knowing when to build, when to stop, and when to never start.

Those are engineering judgment. Those are the skills that let me get as far as Principal Engineer in the first place. And if you still can’t get these tools to work for you, consider the view from the street: the Model Ts are everywhere. Ford has been cranking them out day after day, week after week. You can see the cars. You can see the factory. You walked in off the street, tried to use one of the machines, failed, and concluded that assembly lines don’t work, while the streets fill with cars. The code is shipping. If you can’t get the machines to work, that is definitely a you problem. Skill issue.

The skill is management. Programmers, historically, do not have strong management chops. The ones who do tend to go into management. The ones who’ve been writing code for a decade or two often explicitly opted out of that track. The field just showed up at their desks and told them their job is management now. No wonder they’re struggling. But the fastest way to learn management is to not realize you’re learning it.

Nothing can escape the event horizon, so you might as well learn the skills that let you thrive on this side of it.

Welcome to the Mechanicum, developer. Let’s get to work.

Your Harness

Use Cursor. I’m recommending it explicitly, and specifically, for two reasons.

First, it lets you watch. The agent’s work is visible in the editor. You see files open, code appear, tests run, errors get diagnosed. You ride along. The same IDE style you’re used to touching, just, coming alive underneath your fingers. This is critical: we are deliberately staying head-full, not headless. You are going to watch and learn before you trust and delegate.

Second, it supports Niko directly.

The Problems

If you’ve tried agentic coding and gotten burned (or know someone who has), the story probably hit some combination of these:

- 🗺️ The agent dives straight into coding without understanding the problem.

- 📋 The agent doesn’t understand your project and makes bizarre architectural choices.

- 📜 The context window fills up, the agent forgets what it was doing, and you lose work.

- 🧠 Every new conversation starts from scratch; nothing is remembered. You have to correct the same mistakes over and over again.

- ✅ The agent ships broken code confidently, or gold-plates something nobody asked for.

- 🔁 One failed attempt and the whole thing derails; you have to start over.

These are all solved problems. Let me introduce you to one of the solutions.

But first, a disclaimer: The specific solution does not matter. Everything you read here will be obsolete in two years, probably in one. But today, here’s a solution that’ll help you speedrun through Steve Yegge’s stages and achieve a significant productivity boost with minimal skill atrophy along the way. Executed properly, that ought to give you a significant leg up on optionality as you navigate the next few years.

Niko

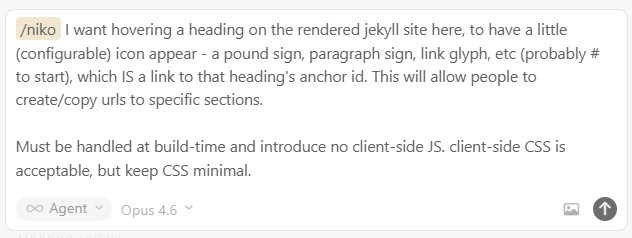

Niko is a structured agentic workflow system that lives in your repository as a set of Cursor rules. It transforms your AI coding agent into something resembling a senior colleague with a rigorous process. Install it with ai-rizz:

ai-rizz init https://github.com/texarkanine/.cursor-rules.git --commit

ai-rizz add ruleset niko

Then, in Cursor’s chat interface, in Agent mode, type /niko followed by what you want to build.

Here’s what happens, and here’s how it solves each of those problems.

🗺️ Planning

Niko doesn’t jump to code. It analyzes your request, determines the task’s complexity (Level 1 through 4, from quick bugfix to multi-milestone system change), and plans before building. For Level 2 and above, Niko produces a concrete implementation plan: specific files, specific functions, specific test cases, sequenced in dependency order. For Level 3 and above, if the design is genuinely ambiguous, Niko enters a creative phase to explore options and make a reasoned decision before committing. Niko only comes up for air to ask you a question if the answer is not obvious.

Once made, the plan is written to disk so it survives across sessions.

📋 Context initialization

The first time you run /niko in a project, it scans your codebase and creates persistent files in a “memory bank”:

- productContext.md: what this thing is and who it’s for

- systemPatterns.md: how the architecture works and what’s non-obvious

- techContext.md: the stack, the tools, the commands

These persist across tasks. Every subsequent session starts with Niko reading these files and orienting itself. This is your AGENTS.md but better - created explicitly in alignment with those best practices.

📜 Context exhaustion

When Niko begins a task, some additional ephemeral files are created in the “memory bank”:

- projectbrief.md: the user story and requirements

- activeContext.md: the current task and phase - the key points of a context window

- tasks.md: the checklist of work to do

- progress.md: the history of completed work and phase transitions

When Niko finishes a phase and it’s time for your input, you close the context window and open a new one before running the next /niko-* command. Niko reads the memory bank from disk and picks up where it left off. Clean context, full awareness. This is what makes context windows cattle, not pets: disposable and replaceable, because the state that matters lives on disk, not in the window.

This also forces the system to prove, every time, that progress is actually being persisted externally. There’s no tolerance for sloppiness, because failing to save state properly means that the workflow can’t progress.

🧠 Memory and reconstruction

State is saved to disk and to git history. The files on disk give the agent current state; the git history gives reconstruction of progress over time. progress.md is purely additive: every phase completion appends a new entry; this is the why of the history. An agent with an empty context window can read the files, diff the code against the last recorded state, and reconstruct where they are in the process.

This solves compaction: Key info is uncompressed on disk, available when needed.

This solves capability degradation at fuller context windows: You can just throw a full window away at any time with minimal churn and no appreciable change in behavior - since it was always running this way.

This solves the “agent went off the rails and ruined everything” problem: each phase transition is a git commit; rollback is just a git revert away.
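To make the "state lives on disk, not in the window" idea concrete, here is a minimal sketch of an append-only progress log plus a resume step that a fresh context could run. The file names progress.md and activeContext.md come from the description above; everything else is an assumption for illustration, including the entry format and the `record_phase` and `resume` helpers. It is not Niko's actual implementation.

```python
import tempfile
from datetime import datetime, timezone
from pathlib import Path
from typing import Dict


def record_phase(bank: Path, phase: str, summary: str) -> None:
    """Append one entry to progress.md and overwrite the current-phase pointer."""
    bank.mkdir(parents=True, exist_ok=True)
    stamp = datetime.now(timezone.utc).isoformat(timespec="seconds")
    one_line = summary.replace("\n", " ")
    # Append mode: earlier entries are never rewritten, so history only grows.
    with (bank / "progress.md").open("a", encoding="utf-8") as log:
        log.write(f"- {stamp} | {phase} | {one_line}\n")
    (bank / "activeContext.md").write_text(f"phase: {phase}\n", encoding="utf-8")


def resume(bank: Path) -> Dict[str, object]:
    """Everything a brand-new context window can learn from disk alone."""
    progress = bank / "progress.md"
    active = bank / "activeContext.md"
    history = progress.read_text(encoding="utf-8").splitlines() if progress.exists() else []
    phase = None
    if active.exists():
        phase = active.read_text(encoding="utf-8").split(":", 1)[1].strip()
    return {"phase": phase, "completed": len(history), "history": history}


# Simulate two "sessions" that share nothing except the directory on disk.
with tempfile.TemporaryDirectory() as tmp:
    bank = Path(tmp) / "memory-bank" / "active"
    record_phase(bank, "plan", "implementation plan written")
    record_phase(bank, "build", "all tests passing")
    state = resume(bank)  # a fresh context starts here
    print(state["phase"], state["completed"])
```

Because `resume` reads nothing but files, throwing away the context window costs nothing, and a failure to persist shows up immediately as a workflow that cannot continue.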

✅ Validation

Before building starts, Niko runs preflight checks on the plan, validating it against your actual codebase and user stories for convention conflicts, dependency impacts, and completeness gaps. After building, Niko runs QA: a semantic review checking for KISS, DRY, and YAGNI violations, incomplete implementations, debug artifacts, and regressions against established patterns. Both phases gate forward progress. If QA fails, Niko loops back to build. If preflight fails, Niko loops back to plan.

Niko only comes up for air to ask you a question if there’s an issue that can’t be automatically resolved.
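The gating described in this section behaves like a small state machine: a failed preflight sends the work back to plan, and a failed QA sends it back to build. The sketch below shows that control flow only. `run_gated`, its callables, and the `max_steps` cutoff are hypothetical, chosen to illustrate the loop rather than to mirror how Niko is written.

```python
from typing import Callable, List


def run_gated(
    plan: Callable[[], None],
    preflight: Callable[[], bool],
    build: Callable[[], None],
    qa: Callable[[], bool],
    max_steps: int = 20,
) -> List[str]:
    """Drive plan -> preflight -> build -> qa, looping back on gate failures.

    Returns the trace of phases visited. Raises if the gates never pass,
    which stands in for escalating to the human.
    """
    trace: List[str] = []
    phase = "plan"
    for _ in range(max_steps):
        trace.append(phase)
        if phase == "plan":
            plan()
            phase = "preflight"
        elif phase == "preflight":
            phase = "build" if preflight() else "plan"  # failed preflight: re-plan
        elif phase == "build":
            build()
            phase = "qa"
        elif qa():
            return trace  # both gates passed
        else:
            phase = "build"  # failed QA: rebuild
    raise RuntimeError("gates never passed; ask the human")


# Example run: preflight fails once, QA fails once, then everything passes.
preflight_results = iter([False, True])
qa_results = iter([False, True])
trace = run_gated(
    plan=lambda: None,
    preflight=lambda: next(preflight_results),
    build=lambda: None,
    qa=lambda: next(qa_results),
)
print(" -> ".join(trace))
# plan -> preflight -> plan -> preflight -> build -> qa -> build -> qa
```

The step limit is the interesting design choice: an automated loop needs some bound after which it stops retrying and surfaces the problem to a person.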

🔁 Resilience

The command structure itself is a disciplined loop. Close the window, open a new one, run the next command. If an attempt fails, the memory bank has the record of what was tried and what went wrong. Niko’s /refresh command performs a systematic re-diagnosis of implementation struggles: discard previous assumptions, map the system, hypothesize broadly, investigate with evidence, then fix. You’re never truly stuck because you’re never more than one clean context window away from a fresh start with full history.

🧠 Archival

After the build ships and QA passes, Niko reflects. Did the plan hold up? Were the creative decisions right? What surprised us?

Then Niko archives: a self-contained document summarizing the task, inlining all ephemeral content, and clearing the working state so you’re clean for the next task. This is permanently filed away in the memory bank in your repository for future reference, so that you can learn from past experiences and improve over time.

Try It!

Got a task that needs some code written? Go try it now!

- Add Niko to your repository

- Open Cursor and select Agent mode in the chat pane.

- Choose Claude Opus (or similar heavyweight model; you do not need “MAX” mode)

- Run /niko to initialize your memory bank.

- REVIEW THE NEW FILES and make sure they look good. Hand-edit them if needed.

- Commit - Niko’s ready.

- 😺 In a new context window, run /niko <describe what you want>

- Choose Claude Opus (or similar heavyweight model)

- Watch

- Read the Reflection document Niko writes at the end.

- Open a pull request

- /niko-archive (if applicable, see below)

- Merge the pull request

- GOTO 6 😺

Operational Notes

Niko’s README covers usage well, so I’ll just mention some “season to taste” personal preferences that aren’t in the docs.

Model Management: To echo the name of a feature that Claude Code shipped ages ago: “Opus for Plan Mode!” Always use the most powerful model you can when doing either of these:

- Initial Memory Bank setup

- Starting a task that you don’t already know is simple

You want the heavyweight reasoning and thinking when forming plans. Garbage in, garbage out! For Level 3 tasks, where Niko stops after planning to give you a chance to review the plan, you may, at your discretion, judge that a lighter, cheaper, faster model will be sufficient and switch.

Similarly, if you’re manually entering /niko-reflect after a build with a simpler agent, bump back up to Opus for that, too.

You can drop to Cursor’s “Auto” model for /niko-archive, at the end.

However: “Buy once, cry once.” I have found it’s usually just better to stay with Opus throughout, and never have to deal with shoddy work that has to be reworked. On the positive side, Niko’s context-window management means you never need to pay extra for Cursor’s “Max” mode.

Reflection as PR context. I leave Niko’s memory-bank/active/reflection/ files in place when I open a pull request. The reviewer gets the agent’s own retrospective alongside the code: what was planned, what changed, what was learned. It helps head off a lot of “why did you…?” questions.

Manual cleanup after Level 1. Level 1 tasks (quick fixes) don’t have a reflect or archive phase, so the memory-bank/active/ directory doesn’t get cleaned up automatically. Once you’re satisfied with the work, delete it yourself. There’s no slash-command for this because it would be wasteful to make you type /niko-cleanup to get an AI agent to run rm -rf memory-bank/active when you could just delete the folder yourself.

When things go sideways. /refresh is for troubleshooting implementation. If Niko just can’t figure out how to get something right… that’s a /refresh situation. /refresh is special in that you usually want to use it IN the existing context, so that the full specifics of what didn’t work are available to the troubleshooting process.

/niko-creative is for exploring solution spaces. Usually this will happen automatically as part of the planning phase, but you might need it later if something unforeseen crops up. You can also use it ad-hoc, outside a workflow, as a brainstorm buddy.

Both are human touchpoints designed to help you keep things on track when the automated process struggles. Ideally, you’d never need to use them. I’ve used these to “un-stick” Niko workflows maybe four times in the last six months; Niko doesn’t usually get stuck anymore because modern models are really good.

The Progression#

So you’ve been using Niko for a while now. You’ve watched the agent plan, build, test, reflect, archive. You’ve run the commands, closed and reopened context windows, checked that the memory bank was persisted. You’ve seen the process work, firsthand, task after task.

Good job! Niko operationalizes the “agent actually doing things” part of agentic development, and forces you - through using the commands in sequence - to adhere to a process. You & Niko can go all the way to Yegge’s Stage 7 in Cursor… and there, you’ll definitely start to feel the fatigue. You can’t keep up with everything that’s going on. You can’t look at everything. You don’t need to look at everything.

It’s time to go headless and parallel.

Trust is the inflection point. Going headless and going massively parallel are two separate axes, and there’s no required order. It depends on how you’re developing and what you’re comfortable with.

Going headless usually happens locally first, either with Cursor’s CLI Agent or, much more likely, with Claude Code. Don’t worry - you can run Niko through a16n to bring it over to Claude Code. But web-based tools like Cursor Cloud Agents and Claude Code Web facilitate headless parallelism remotely. The specific solution does not matter ;).

I told you all this several sections ago. Your managers have been doing exactly this with you throughout your entire career. Setting intent, providing context, exercising discernment about what their reports should work on, and reviewing the output. The three skills, applied to humans.

But now you’ve done it with machines. Not read about it, not nodded along, not agreed in principle. You have actually managed a direct report through a multi-phase workflow, watched the process work, developed trust in the delegation, and scaled (or are ready to scale). Niko is a software engineering management training course disguised as an agentic coding tool, and the fastest way to learn management is to not realize you’re learning it.

So you’re a manager now. Congrats on the promotion!

Your New Life#

You write excellent tickets now. Acceptance criteria, edge cases, the works. At a minimum.

You’re headed towards writing really good technical briefs and then stepping back to write really good product briefs. The progression up the power continuum maps directly onto the progression through Yegge’s stages: the further up you climb from the code towards the intent, the more employees you can manage and the better your briefs need to be. You don’t need Niko anymore, though the principles you learned together will continue to serve you well.

There was a time when the models’ shortcomings made it look like we had a tooling issue. Now that the models are capable enough, it’s plain to see: